Oct 28, 2020. How to improve Docker build time by leveraging different caching strategies while using Docker in Docker, illustrated using GitLab CI and Kubernetes.

Updated in 2021

At CALLR, we have been using GitLab for quite a while. We are also using more and more Docker containers. In this post, I’ll show you how we build docker images with a simple .gitlab-ci.yml file.

Let’s not waste any time.

Here is a .gitlab-ci.yml file that you can drop in directly without any modification in a project with a working Dockerfile.

It will:

- build a docker image for each git commit, tagging the docker image with the commit SHA

- tag the docker image “latest” for the “master” branch

- keep Git tags in sync with Docker tags

All docker images will be pushed to the GitLab Container Registry.
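A minimal sketch of a pipeline that behaves as described above. It assumes a runner that allows Docker-in-Docker (privileged mode) and relies on GitLab's predefined variables (`$CI_REGISTRY`, `$CI_REGISTRY_IMAGE`, `$CI_COMMIT_SHA`, `$CI_COMMIT_REF_NAME`, `$CI_JOB_TOKEN`). The job names are arbitrary, and details may need adjusting for your runner setup.

```yaml
# Pin a version instead of "latest" so the pipeline stays reproducible.
image: docker:20

services:
  - docker:20-dind

stages:
  - build
  - push

before_script:
  # Log in to the GitLab Container Registry with the job token.
  - echo -n "$CI_JOB_TOKEN" | docker login -u gitlab-ci-token --password-stdin "$CI_REGISTRY"

Build:
  stage: build
  script:
    # Fetch the previous image for layer caching; tolerate failure on the very first run.
    - docker pull "$CI_REGISTRY_IMAGE:latest" || true
    - >
      docker build
      --pull
      --cache-from "$CI_REGISTRY_IMAGE:latest"
      --tag "$CI_REGISTRY_IMAGE:$CI_COMMIT_SHA"
      .
    - docker push "$CI_REGISTRY_IMAGE:$CI_COMMIT_SHA"

Push latest:
  stage: push
  variables:
    # Only docker pull/tag/push here, so skip cloning the repository.
    GIT_STRATEGY: none
  only:
    - master
  script:
    - docker pull "$CI_REGISTRY_IMAGE:$CI_COMMIT_SHA"
    - docker tag "$CI_REGISTRY_IMAGE:$CI_COMMIT_SHA" "$CI_REGISTRY_IMAGE:latest"
    - docker push "$CI_REGISTRY_IMAGE:latest"

Push tag:
  stage: push
  variables:
    GIT_STRATEGY: none
  only:
    - tags
  script:
    # In a tag pipeline, $CI_COMMIT_REF_NAME holds the Git tag name.
    - docker pull "$CI_REGISTRY_IMAGE:$CI_COMMIT_SHA"
    - docker tag "$CI_REGISTRY_IMAGE:$CI_COMMIT_SHA" "$CI_REGISTRY_IMAGE:$CI_COMMIT_REF_NAME"
    - docker push "$CI_REGISTRY_IMAGE:$CI_COMMIT_REF_NAME"
```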

- Do not use “latest” nor “stable” images when using a CI. Why? Because you want reproducibility. You want your pipeline to work in 6 months. Or 6 years. Latest images will break things. Always target a version. Of course, the same applies to the base image in your Dockerfile. Hence `image: docker:20` here.

- To speed up your docker builds, pull the “latest” image (`$CI_REGISTRY_IMAGE:latest`) before building, and then build with `--cache-from $CI_REGISTRY_IMAGE:latest`. This will make sure docker has the latest image and can leverage layer caching. Chances are you did not change all layers, so the build process will be very fast.

- When building, use `--pull` to always attempt to pull a newer version of the image. Because you are targeting a specific version, this makes sure you have the latest (security) updates of that version.

- In the push jobs, tell GitLab not to clone the source code with `GIT_STRATEGY: none`. Since we are just playing with docker pull/push, we do not need the source code. This will speed things up as well.

- Finally, keep your Git tags in sync with your Docker tags. If you have not automated this, you have probably found yourself in the situation of wondering “which git tag is this image again?”. No more. Use GitLab “tags” pipelines.

- Building Docker images with GitLab CI/CD from GitLab

In the previous post I described how to run your own GitLab server with a CI runner. In this one, I’m going to walk through my experience of configuring GitLab CI for one of my projects. I faced a few problems during this process, which I will highlight in this post.

Some words about the project:

- Python/Flask backend with PostgreSQL as a database, with a bunch of unit tests.

- React/Reflux on the frontend with Webpack for bundling. No JS tests.

- Frontend and backend are bundled in a single docker container and deployed to Kubernetes

Building the first container

The first thing to do after importing the repository into GitLab is to create .gitlab-ci.yml. GitLab itself has a lot of useful information about CI configuration, for example this and this.

Previously, I ran the following steps manually to test, compile, and deploy:

- npm run build - to build all js/css

- build.sh - to build docker container

- docker-compose -f docker-compose-test.yml run --rm api-test nosetests - to run the tests

- deploy.sh - to deploy it

Everything is pretty common for most web projects. So, I started with a simple .gitlab-ci.yml file, trying to build my Python code:
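A minimal sketch of such a build job, assuming a private registry at the hypothetical address `registry.example.com`, a hypothetical image path `group/project`, and a hypothetical `$REGISTRY_USER` project variable for the login name:

```yaml
image: docker:latest

services:
  - docker:dind

variables:
  # Hypothetical image path; replace with your own registry and project.
  IMAGE: registry.example.com/group/project

stages:
  - build

build:
  stage: build
  before_script:
    # $CI_BUILD_TOKEN is read from the project variables; $REGISTRY_USER is an assumption.
    - docker login -u "$REGISTRY_USER" -p "$CI_BUILD_TOKEN" registry.example.com
  script:
    # Build from the Dockerfile in the repository root and push a working tag.
    - docker build -t "$IMAGE:ci" .
    - docker push "$IMAGE:ci"
```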

`$CI_BUILD_TOKEN` is the password for my Docker registry, saved in the project variables in the GitLab project settings.

And it actually worked fine. I got my Docker container built and pushed to the registry. The next step was to be able to run tests. This is where things became less obvious. For testing, we need to add another stage to our gitlab-ci file.

Testing. First try

After reading posts like this, I thought that it would be as easy as declaring a service and adding a test stage:
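A sketch of what such a first attempt might look like, assuming the same hypothetical image path `registry.example.com/group/project` and `$REGISTRY_USER` variable:

```yaml
image: docker:latest

services:
  - docker:dind
  # Declared as a GitLab service, so it is linked to the job container only.
  - postgres:9.5

variables:
  IMAGE: registry.example.com/group/project   # hypothetical

test:
  stage: test
  before_script:
    - docker login -u "$REGISTRY_USER" -p "$CI_BUILD_TOKEN" registry.example.com
  script:
    # This container is started by the dind daemon, not by the runner,
    # so it has no link to the postgres service declared above.
    - docker run --rm "$IMAGE:ci" nosetests
```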

But it didn’t work. As far as I understood it, it’s because of the docker-in-docker runner. Basically, we have 2 containers, docker:latest and postgresql:9.5, and they are linked perfectly fine. But then we’re bootstrapping our own container inside this docker container. And this container can’t use docker links to access postgres, because it’s outside of its scope.

Testing. Second try

Then I tried to use my container as the image for the test stage, with the service declared in the test stage itself, like this:
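A minimal sketch of such a job, assuming the hypothetical image `registry.example.com/group/project:ci` was pushed by an earlier build stage:

```yaml
test:
  stage: test
  # The runner pulls this image (and the service) before any script runs,
  # so a docker login in before_script comes too late for a private registry.
  image: registry.example.com/group/project:ci
  services:
    - postgres:9.5
  script:
    # Runs directly inside the application container, linked to postgres.
    - nosetests
```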

But it also didn’t work because of authentication.

My Docker registry needs authentication, which I do in the before_script section. But before_script is called after the services are started. I assume that this method should work for public images.

Testing. Third try. Kinda working solution

So I decided to try using docker-compose for the tests, as I had been doing manually. Docker-compose should be able to run and link everything together. And since this page in the documentation says:

GitLab CI allows you to use Docker Engine to build and test docker-based projects.

This also allows to you to use docker-compose and other docker-enabled tools.

I was very confused when I was not able to use docker-compose, since the docker:latest image has no docker-compose installed. I spent some time googling and trying to install compose inside the container, and ended up using the image jonaskello/docker-and-compose instead of the recommended one.

So my test stage changed to this:
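A sketch of a test job along these lines. It reuses the compose file and command from the manual steps; the image tag is left out here, and the registry address and `$REGISTRY_USER` are hypothetical:

```yaml
test:
  stage: test
  # Image that bundles the docker client and docker-compose.
  image: jonaskello/docker-and-compose
  services:
    - docker:dind
  before_script:
    # Needed so that compose can pull the application image from the private registry.
    - docker login -u "$REGISTRY_USER" -p "$CI_BUILD_TOKEN" registry.example.com
  script:
    # Compose starts the database and the test container on the same dind daemon.
    - docker-compose -f docker-compose-test.yml run --rm api-test nosetests
```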

This actually worked, but from time to time I was seeing weird race conditions during database provisioning. It’s not a big problem, and could be fixed easily. But I decided to try one more approach.

Testing. Final version

This time I decided to run a postgres container during the test stage and link it to my test container. This requires providing additional configuration for the postgres container, but it is still the closest to the original services approach.
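A sketch of this approach, under a few assumptions: the credentials, the `DATABASE_URL` variable read by the application, the image path, and `$REGISTRY_USER` are all hypothetical, and the readiness loop is an addition to avoid starting the tests before the database accepts connections.

```yaml
image: docker:latest

services:
  - docker:dind

variables:
  IMAGE: registry.example.com/group/project   # hypothetical

test:
  stage: test
  before_script:
    - docker login -u "$REGISTRY_USER" -p "$CI_BUILD_TOKEN" registry.example.com
  script:
    # Start postgres on the same dind daemon as the test container.
    - docker run -d --name postgres -e POSTGRES_USER=test -e POSTGRES_PASSWORD=test -e POSTGRES_DB=test postgres:9.5
    # Wait until the server accepts TCP connections.
    - until docker exec postgres pg_isready -h 127.0.0.1 -U test; do sleep 1; done
    # Link the database into the test container and run the test suite.
    - docker run --rm --link postgres:postgres -e DATABASE_URL=postgresql://test:test@postgres/test "$IMAGE:ci" nosetests
```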

Now that we have our Python container built and tested, we need to add one more thing. We need to compile our JavaScript and CSS and put them into the release container. This stage actually runs before the build. To be able to share files between stages, we need to use a cache. In my case we need to cache only one directory: the one where webpack produces the compiled files, src/static/dist.
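A sketch of a compile stage that caches the webpack output directory. The `node:6` image and the stage and job names are assumptions; `npm run build` comes from the manual steps listed earlier.

```yaml
stages:
  - compile
  - build

# Keep the webpack output so that later stages can reuse it.
cache:
  paths:
    - src/static/dist

compile:
  stage: compile
  image: node:6   # hypothetical Node.js version
  script:
    - npm install
    # Writes the bundled js/css into src/static/dist.
    - npm run build
```

Note that GitLab treats the cache as best effort, so for files that a later stage strictly depends on, `artifacts` may be the more reliable mechanism.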

Caching is described in more detail in the official docs.

As for the release stage, I’m simply going to tag the container with the build number and push it back to the registry.
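A sketch of such a release job, assuming GitLab's predefined `$CI_PIPELINE_ID` stands in for the build number, and reusing the hypothetical image path and `$REGISTRY_USER` variable:

```yaml
image: docker:latest

services:
  - docker:dind

variables:
  IMAGE: registry.example.com/group/project   # hypothetical

stages:
  - build
  - test
  - release

release:
  stage: release
  before_script:
    - docker login -u "$REGISTRY_USER" -p "$CI_BUILD_TOKEN" registry.example.com
  script:
    # Re-tag the image that passed the tests with the pipeline number and push it.
    - docker pull "$IMAGE:ci"
    - docker tag "$IMAGE:ci" "$IMAGE:$CI_PIPELINE_ID"
    - docker push "$IMAGE:$CI_PIPELINE_ID"
```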

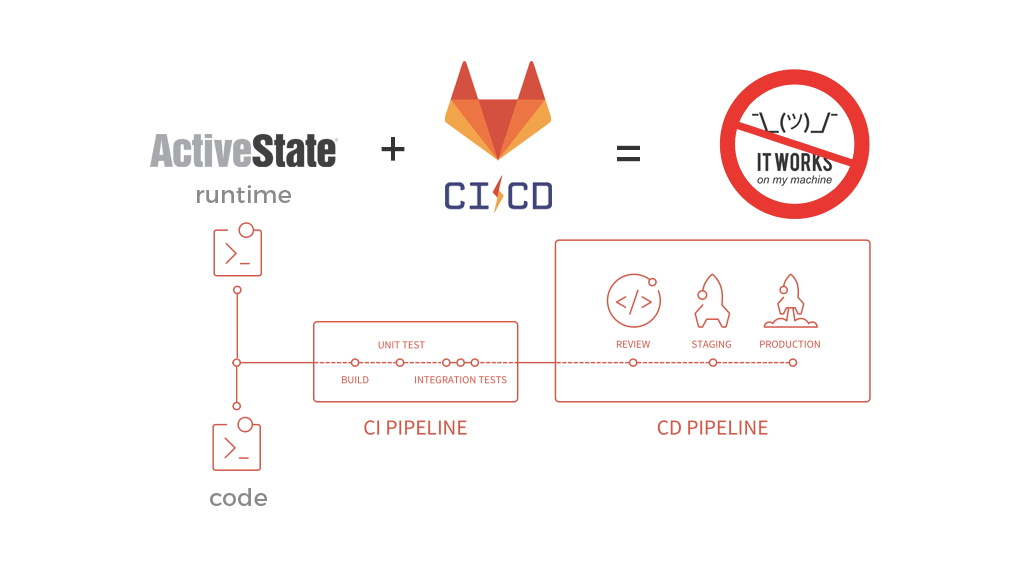

Now we have a working CI pipeline which can build a container, run tests against this container, and then push it to the registry. So far so good. The only problem is that all of this takes a very long time. For my project, it takes about 20 minutes to finish all these stages. Most of the time is spent building docker layers and downloading and installing Python and npm packages. The next post will probably be about reducing this time by using some local caching services.